Yesterday, as I was scrolling X, the algorithm decided that I should see a post by an AI research scientist who had recently published an article. Ever since I started discussing vibe coding, and my new term vibe theorizing (or vibe science), the algorithm has been putting more related posts in my feed.

I decided to look into this article, not by reading or skimming it as scholars normally would, but by using ChatGPT entirely to summarize and peer review what it has to say. I downloaded Professor Buehler’s paper, which he shared in the comments, and briefly looked through it to see what was inside. There were some nice diagrams and other things that looked indecipherable to a layman. AI is not my area of study, and you would be right to say that I’m, for the most part, unfamiliar with the latest breakthroughs in artificial intelligence and machine learning, and with what is being discussed in those circles and in academic circles.

I’m out of the loop, so to speak: a passive consumer of AI who experiments with whatever comes my way. I’m not on the cutting edge, I’m not extensively researching AI papers, and I’m not creating my own AI startup. The AI boom is overwhelming to keep up with, as new products come out or are improved upon in the private sector, let alone, I would imagine, the innovation happening across the public and private sectors, academia included. Nonetheless, the paper sparked my curiosity! I have been summarizing and reviewing papers with ChatGPT for some years, so I kind of know intuitively what feels right, its limitations, and where it shines.

I even found a video that discussed what I see as the future of AI and what I theorize will occur sooner rather than later: AI not only collaborating with scientists like a research assistant, but potentially replacing scientists entirely in the scientific process. In other words, AI would not be a mere participant in knowledge but would actively create it, and we would soon have to acknowledge its published work and scientific discoveries. Imagine that: AI producing a paper from start to finish, with or without any assistance. Mind-blowing!

But just as with vibe coding, where nearly anyone can use natural language to leverage AI and create code, even entirely new apps, vibe theorizing could be leveraged by anyone with at least a high school or college education to get into the weeds of knowledge building and perhaps even contribute to revolutionary scientific breakthroughs. This is not to say AI will replace scientists or academics entirely (not yet, I hope), but to recognize how AI will transform and contribute to every field of study.

// A side note: I didn’t know about the whole controversy around the Nobel Prize winners in AI until recently.

Part 2.

ChatGPT:

Comprehensive Summary of Self-Organizing Graph Reasoning Evolves into a Critical State for Continuous Discovery Through Structural–Semantic Dynamics

Author(s): Markus J. Buehler

Publication Date: March 25, 2025

Journal/Publisher: arXiv preprint (cs.AI)

Field/Discipline: Artificial Intelligence / Complex Systems / Graph Theory

DOI/Link: arXiv:2503.18852v1

Executive Summary (Optional)

This paper investigates the dynamic behavior of agentic graph-native reasoning systems, focusing on how their structure and semantic content evolve during iterative thinking. Buehler identifies a consistent dominance of semantic entropy over structural entropy, which drives the system into a self-organized critical state analogous to critical phenomena in physics and biology. A novel “Critical Discovery Parameter” (D ≈ -0.03) quantifies this regime, where innovation is sustained through a stable fraction of semantically surprising yet structurally coherent connections. The findings not only offer theoretical insight into emergent intelligence but also propose reinforcement learning frameworks to further optimize this discovery process.

Research Question & Objectives

The core question addressed is:

How do agentic deep graph reasoning models evolve structurally and semantically over time, and can this evolution be characterized by general principles akin to those found in physical or biological critical systems?

Objectives include:

- Quantitatively analyzing the interplay between structural and semantic entropy in evolving knowledge graphs.

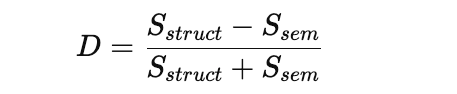

- Introducing a formal measure (Critical Discovery Parameter) to quantify balance or imbalance between structure and meaning.

- Identifying emergent behaviors (e.g., scale-free topology, semantic novelty) that sustain continuous discovery.

- Drawing interdisciplinary parallels with known critical systems.

- Proposing a reinforcement learning approach to tune these systems for optimal discovery.

Core Argument or Hypothesis

The central thesis is that agentic graph reasoning systems spontaneously evolve into a self-organized critical state, where semantic entropy subtly but persistently dominates over structural entropy. This imbalance underpins the system’s continuous capacity for innovation, driven not by structural optimization but by an intrinsic semantic exploration.

This critical balance can be captured by a dimensionless “Critical Discovery Parameter” (D). When D ≈ -0.03, the system sustains a steady regime of exploration, characterized by surprising edges—semantically distant but structurally justified connections.

Key Findings & Conclusions

- Semantic entropy remains consistently higher than structural entropy throughout the graph’s evolution, indicating a dominance of conceptual novelty.

- A phase transition-like behavior is observed around iteration 400, where the correlation between structural and semantic entropy shifts from positive to negative.

- The system maintains a stable fraction (~12%) of “surprising” edges, which connect semantically distant nodes—facilitating long-range, cross-domain discovery.

- The Critical Discovery Parameter (D) stabilizes around -0.03, marking a persistent imbalance favoring semantic entropy.

- Semantic and structural dimensions of the graph are partially decoupled, allowing the system to be robust (structural coherence) yet flexible (semantic novelty).

- Analogous dynamics are seen in self-organizing critical systems in physics, biology, and cognition.

- A reinforcement learning framework is proposed to fine-tune the model toward optimal discovery conditions.

Methodology & Data

Model Used:

Graph-PRefLexOR, an agentic graph-native reasoning model developed in earlier work by Buehler.

Data Collection:

- The model generates graphs over 1,000 reasoning iterations.

- Final graph: 3,835 nodes and 11,910 edges.

- At each step, reasoning tokens embed new knowledge into a graph structure, which is recursively expanded.

Entropy Measures:

- Structural entropy: Von Neumann graph entropy, based on the normalized Laplacian of the adjacency matrix.

- Semantic entropy: Derived from cosine similarity of node embeddings using a pretrained language model (MiniLM-L6-v2).
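To make the structural measure concrete, here is a minimal sketch of a von Neumann graph entropy computed from the normalized Laplacian. It assumes one common convention: scale the Laplacian to unit trace and take the Shannon entropy of its eigenvalues. The paper's exact normalization may differ. All function names are illustrative, and the small Jacobi eigenvalue helper exists only to keep the example dependency-free; a library routine such as NumPy's `numpy.linalg.eigvalsh` would normally take its place.

```python
import math


def symmetric_eigenvalues(matrix, max_sweeps=100, tol=1e-22):
    """Eigenvalues of a small symmetric matrix via cyclic Jacobi rotations."""
    a = [row[:] for row in matrix]
    n = len(a)
    for _ in range(max_sweeps):
        off_diagonal = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off_diagonal < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                # Rotation angle that zeroes the (p, q) entry.
                theta = 0.5 * math.atan2(2.0 * a[p][q], a[q][q] - a[p][p])
                c, s = math.cos(theta), math.sin(theta)
                for k in range(n):  # update columns p and q
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):  # update rows p and q
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
    return [a[i][i] for i in range(n)]


def von_neumann_graph_entropy(adjacency):
    """Entropy of the unit-trace normalized Laplacian (assumed convention)."""
    n = len(adjacency)
    degree = [sum(row) for row in adjacency]
    laplacian = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                # Isolated nodes contribute nothing to the Laplacian.
                laplacian[i][j] = 1.0 if degree[i] > 0 else 0.0
            elif adjacency[i][j] and degree[i] > 0 and degree[j] > 0:
                laplacian[i][j] = -adjacency[i][j] / math.sqrt(degree[i] * degree[j])
    trace = sum(laplacian[i][i] for i in range(n))
    if trace == 0:
        return 0.0  # graph with no edges
    density = [[value / trace for value in row] for row in laplacian]
    eigenvalues = symmetric_eigenvalues(density)
    # Skip (numerically) zero eigenvalues, since 0 * log(0) is taken as 0.
    return -sum(lam * math.log(lam) for lam in eigenvalues if lam > 1e-12)


# A triangle graph: the scaled Laplacian has eigenvalues 0, 1/2, 1/2.
triangle = [[0, 1, 1], [1, 0, 1], [1, 1, 0]]
triangle_entropy = von_neumann_graph_entropy(triangle)
```

For the triangle, the entropy works out to ln 2, which is a handy sanity check before trying larger graphs.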

Other Analyses:

- Louvain method for community detection.

- PCA for visualizing semantic embeddings.

- “Surprising edges” identified via semantic distance threshold (cosine sim < 0.1).

- Correlation between betweenness centrality and local semantic diversity assessed using Pearson correlation.

Theoretical Framework

- Self-organized criticality: Inspired by Bak-Tang-Wiesenfeld’s sandpile model and biological phase transitions, the study uses entropy as a proxy for energy-like variables in complex systems.

- Von Neumann entropy: Quantifies disorder in graph structure.

- Semantic entropy: Extends earlier semantic information theories (e.g., Carnap & Bar-Hillel) to vector space representations.

- Complex network theory: Informed by Barabási–Albert scale-free networks and Watts–Strogatz small-world properties.

Results & Interpretation

- Entropy Trajectories: Both semantic and structural entropy grow over time, but semantic entropy consistently remains higher.

- Cross-correlation Analysis: Reveals a structural-semantic divergence point—a hallmark of a phase transition.

- Surprising Edges: Maintain a ~12% steady-state ratio, enabling persistent innovation without semantic collapse.

- Community Structure: Louvain clusters in the structural graph do not cleanly align with semantic neighborhoods, confirming decoupled dimensions.

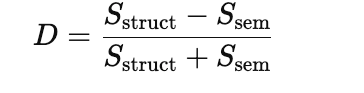

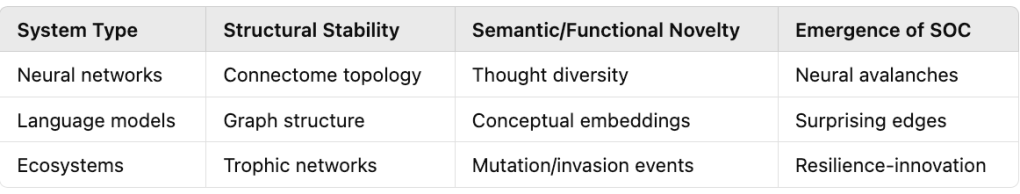

- Critical Discovery Parameter: Defined as D = (S_struct − S_sem) / (S_struct + S_sem).

A stable D ≈ -0.03 indicates sustained semantic dominance.
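As a quick worked example, the parameter can be computed directly from the two entropies, using the formula D = (S_struct − S_sem)/(S_struct + S_sem) that the third review quotes later in this post. The function name and the entropy values below are hypothetical, picked only so that D lands near the reported -0.03.

```python
def critical_discovery_parameter(s_struct, s_sem):
    """D = (S_struct - S_sem) / (S_struct + S_sem); negative when semantics dominate."""
    total = s_struct + s_sem
    if total == 0:
        raise ValueError("both entropies are zero; D is undefined")
    return (s_struct - s_sem) / total


# Hypothetical entropy values, not taken from the paper.
d_value = critical_discovery_parameter(s_struct=4.85, s_sem=5.15)
```

Because D is a ratio, it is dimensionless: scaling both entropies by the same factor leaves it unchanged, and its sign alone says which entropy is larger.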

Limitations & Critiques

- Model Dependence: Findings rely on the specific architecture and training regime of Graph-PRefLexOR.

- Entropy Assumptions: The operationalization of semantic entropy via cosine similarity and spectral methods may not capture deeper semantic nuance.

- Evaluation: No direct benchmark comparison with non-agentic or non-graph-native models; findings are not externally validated.

- Scope: Predictions about behavior under different problem scales (e.g., hierarchical tasks) remain speculative and untested.

- Interpretability: While entropy-based metrics offer macro-level insight, they may obscure micro-level reasoning pathways within the model.

Scholarly Context

- Builds on Buehler’s earlier work developing graph-native reasoning systems (e.g., Graph-PRefLexOR, Graph-aware Isomorphic Attention).

- Extends complexity theory concepts from Kauffman, Bak, and Stanley into artificial intelligence.

- Advances emerging discourse around semantic emergence in LLMs and graph-based reasoning (see Tree of Thoughts, Star bootstrapping models).

- Echoes trends in viewing AI models as dynamical systems rather than static transformers.

Practical & Theoretical Implications

Theoretical:

- Demonstrates that entropy, rather than utility or accuracy, may be a more fundamental variable for reasoning system behavior.

- Suggests universal entropy-based principles for understanding cognition—human or artificial.

- Bridges AI with statistical mechanics, offering new ways to conceptualize emergence and adaptability.

Practical:

- Provides a novel metric (Critical Discovery Parameter) for monitoring or tuning AI reasoning behavior.

- Lays the foundation for reinforcement learning-based optimization of creativity and novelty in AI models.

- Informs future AI architectures that prioritize semantic exploration, possibly relevant for education, research, and creative industries.

- Offers a blueprint for developing multi-scale reasoning systems able to adapt to increasing conceptual complexity.

If you’d like, I can next provide:

- A visual diagram mapping the entropy dynamics.

- A condensed abstract for citation purposes.

- A comparison to other agentic models like Tree of Thoughts or AutoGPT.

Would you like that?

// Me: custom generative questions overview.

ChatGPT:

Absolutely—I’ll now apply the structured reasoning framework to analyze Markus Buehler’s article, “Self-Organizing Graph Reasoning Evolves into a Critical State for Continuous Discovery Through Structural–Semantic Dynamics”. This approach will consist of:

1. Core Meaning Extraction (Structured Analysis)

✅ Central Theme

The paper investigates how agentic, graph-native reasoning systems—specifically Graph-PRefLexOR—spontaneously evolve into a self-organized critical state that fosters continuous discovery. This is achieved through a subtle but persistent semantic entropy dominance over structural entropy, quantified by a newly introduced Critical Discovery Parameter (D ≈ -0.03).

✅ Key Supporting Ideas

- Two types of entropy are measured:

• Structural entropy (S_struct) via Von Neumann graph entropy

• Semantic entropy (S_sem) via cosine similarity in pretrained embedding space

- Semantic entropy consistently dominates structural entropy, indicating a rich reservoir of unexplored meaning.

- This imbalance drives innovation via “surprising edges”—structurally valid but semantically distant links.

- A persistent ~12% of surprising edges indicates a stable, emergent discovery regime.

- The paper draws parallels with self-organized criticality in physics, biology, and cognition.

- A reinforcement learning framework is proposed to explicitly guide AI systems toward optimal conditions for continuous semantic discovery.

✅ Explicit vs. Implicit Elements

- Explicit:

• Quantitative analysis of entropy measures.

• Introduction of a dimensionless metric (D) for critical discovery.

• Empirical validation using 1,000 graph iterations.

• Analogies to critical phase transitions (e.g., Curie temperature, neuronal avalanches).

- Implicit:

• Assumption that semantic embeddings meaningfully reflect conceptual novelty.

• That criticality is a desirable state for general reasoning, not task-specific performance.

2. Five Essential Questions

- What is the Critical Discovery Principle, and how is it quantified within agentic graph reasoning systems?

- How do structural and semantic entropy evolve during reasoning, and what does their relationship reveal about discovery and innovation?

- What is the significance of “surprising edges,” and how do they empirically support the presence of criticality in the system?

- How does this study relate to and build upon the concept of self-organized criticality observed in natural and artificial systems?

- What are the implications of this work for future AI system design, particularly in relation to reinforcement learning and creative reasoning?

3. Structured Answers with Multi-Level Reasoning

Question 1: What is the Critical Discovery Principle, and how is it quantified within agentic graph reasoning systems?

✅ Chain of Thought

- The paper observes that during reasoning, a system builds a knowledge graph where both structure (topology) and semantics (meaning) evolve.

- These evolutions are measured as entropies:

• Structural entropy reflects network complexity.

• Semantic entropy reflects conceptual spread or novelty.

- The Critical Discovery Principle is the insight that continuous discovery happens when semantic entropy subtly but consistently dominates structural entropy.

- This is quantified by D = (S_struct − S_sem) / (S_struct + S_sem), where D stabilizes near -0.03.

✅ Separation of Concerns

- What it is: A dynamic equilibrium of structure and meaning that sustains exploration.

- How it’s measured: Via the parameter D.

- Why it matters: It provides a principled metric to assess innovation in AI reasoning.

✅ Comparative Analysis

- Benefit of D: Captures a subtle balance that traditional performance metrics overlook.

- Limitation: Sensitive to how semantic and structural entropy are operationalized (e.g., choice of embedding model, entropy measure).

✅ Contextual Explanation

“The central premise is that autonomous reasoning processes spontaneously evolve toward and sustain a critical balance between structural complexity and semantic novelty.” (p. 3)

In layman terms, the system is most creative when it is slightly more chaotic in meaning than in structure—enough to spark new ideas without breaking the logic of the graph.

Question 2: How do structural and semantic entropy evolve during reasoning, and what does their relationship reveal about discovery and innovation?

✅ Chain of Thought

- Initially, both entropies grow together—structure becomes more complex, and meaning becomes more diverse.

- Around iteration 400, their correlation shifts from positive to negative.

- Semantic entropy remains consistently higher throughout.

- This implies the system increasingly prioritizes novel meanings over structural order.
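One way to look for the kind of correlation shift described above is a rolling Pearson correlation between the two entropy series, flagging the first window where the sign turns negative. This is only a sketch on synthetic data. The summary does not say how the paper computes its cross-correlation, so the window size, the helper names, and the toy series are all assumptions.

```python
import math


def pearson(xs, ys):
    """Pearson correlation; assumes neither series is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def rolling_correlation(xs, ys, window):
    """Pearson correlation over each consecutive window of the two series."""
    return [
        pearson(xs[i:i + window], ys[i:i + window])
        for i in range(len(xs) - window + 1)
    ]


def first_sign_flip(correlations):
    """Index of the first window whose correlation is negative, or None."""
    for index, value in enumerate(correlations):
        if value < 0:
            return index
    return None


# Synthetic series: "structure" rises throughout, "semantics" rises then falls.
structural = [float(t) for t in range(10)]
semantic = [float(t) if t < 5 else float(9 - t) for t in range(10)]
correlations = rolling_correlation(structural, semantic, window=3)
flip_index = first_sign_flip(correlations)
```

On this toy input the early windows are perfectly correlated and the first negative window starts at index 4, where the synthetic semantic series turns over.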

✅ Tree of Thought

- Interpretation 1: Semantic novelty leads structure—exploration first, order later.

- Interpretation 2: Structure becomes decoupled from semantics to maintain flexibility.

- Interpretation 3: A necessary phase transition occurs, akin to those in physics (e.g., magnetization collapse).

✅ Comparative Analysis

- High structural entropy (too rigid): Less capacity to innovate.

- High semantic entropy (too chaotic): Risk of incoherence.

- Balanced but semantically dominant regime: Sustained innovation.

✅ Verbatim Excerpt

“Semantic entropy consistently remains higher than structural entropy throughout all iterations… explicitly signifying sustained semantic dominance in the network’s evolution.” (p. 2)

✅ Example

A reasoning model that constantly adds structurally sound but semantically bizarre links (e.g., connecting “quantum mechanics” to “medieval cookery”) is not random—those links are meaningfully distant and structurally coherent, driving innovation.

Question 3: What is the significance of ‘surprising edges,’ and how do they empirically support the presence of criticality in the system?

✅ Chain of Thought

- Surprising edges are defined as: “Structurally present yet semantically distant (cosine similarity λ < 0.1).” (p. 10)

- A consistent ~12% of edges fall into this category across all iterations.

- This stable presence suggests the system maintains a steady drive toward novelty without descending into disorder.
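The definition above translates into a short calculation: take each edge, compare the embeddings of its two endpoints, and count the edges whose cosine similarity falls below 0.1. The sketch below uses made-up two-dimensional embeddings and node names for illustration. In the paper, the embeddings come from a pretrained language model.

```python
import math


def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def surprising_edge_fraction(edges, embeddings, threshold=0.1):
    """Share of edges whose endpoint embeddings have cosine similarity below threshold."""
    if not edges:
        return 0.0
    surprising = sum(
        1 for u, v in edges
        if cosine_similarity(embeddings[u], embeddings[v]) < threshold
    )
    return surprising / len(edges)


# Toy embeddings, invented for this example.
toy_embeddings = {
    "protein folding": [1.0, 0.0],
    "silk mechanics": [0.9, 0.1],
    "music theory": [0.0, 1.0],
}
toy_edges = [
    ("protein folding", "silk mechanics"),  # semantically close
    ("protein folding", "music theory"),    # orthogonal, so "surprising"
]
fraction = surprising_edge_fraction(toy_edges, toy_embeddings)
```

Here one of the two edges is surprising, so the fraction is 0.5. The ~12% figure in the summary would be this same ratio, tracked across iterations of the real graph.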

✅ Separation of Concerns

- What are they? Unexpected connections between semantically unrelated nodes.

- Why do they matter? They show the system explores conceptually novel territory.

- What do they indicate? Self-organized criticality—a hallmark of adaptive systems.

✅ Comparative Analysis

- Without surprising edges: System may converge too early, limiting discovery.

- With too many: System loses coherence or purpose.

- At ~12%: Optimal novelty within structural constraints.

✅ Layman-Friendly Interpretation

Imagine you’re writing a story. Most plot twists connect logically to what came before. But some twists are wild—unexpected but not nonsensical. Those surprising connections (like time travel in a historical drama) are what keep the narrative fresh. The graph behaves similarly.

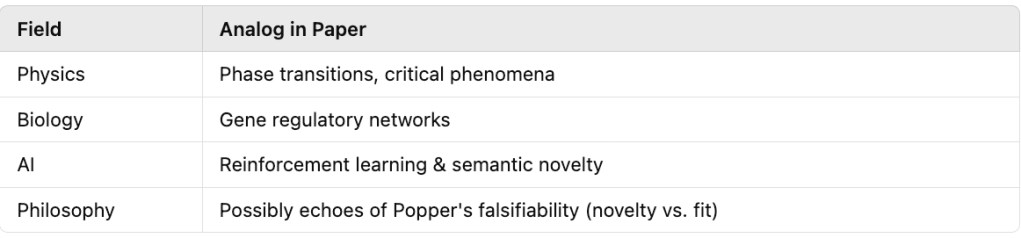

Question 4: How does this study relate to and build upon the concept of self-organized criticality observed in natural and artificial systems?

✅ Chain of Thought

- The system evolves toward a “critical state” without external tuning—a defining trait of self-organized criticality (SOC).

- The entropy cross-correlation behavior mimics second-order phase transitions—e.g., from order to disorder in magnetism or ecosystems.

- The model exhibits:

• Scale-free topology

• Small-world structure

• Persistent innovation capacity

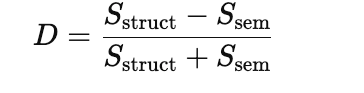

✅ Tree of Thought: Interdisciplinary Parallels

- Physics: Curie temperature in magnetization, Aubry transitions.

- Biology: Neural avalanches, gene regulatory networks.

- Ecology: Stable species interactions with evolutionary shocks.

- Cognition: Semantic leaps, associative creativity.

- Economics/Sociology: Innovation networks.

✅ Comparative Analysis

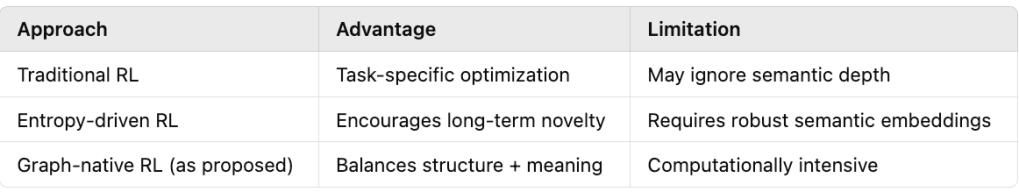

Question 5: What are the implications of this work for future AI system design, particularly in relation to reinforcement learning and creative reasoning?

✅ Chain of Thought

- Current AI models often balance exploitation (known paths) and exploration (novel ideas) arbitrarily.

- The Critical Discovery Parameter (D) offers a principled reward signal to guide this balance.

- A reinforcement learning framework is proposed where the reward is based on:

• Maximizing semantic entropy

• Maintaining a target ratio of surprising edges

• Keeping D near a specified threshold (e.g., -0.03)

✅ Component Breakdown of Proposed RL Reward

- D_t: Current structural-semantic balance

- S_sem(t): Semantic entropy at time t

- α_t: Proportion of surprising edges
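The three components above could be combined into a single scalar reward in many ways. The sketch below shows one hypothetical form: reward semantic entropy, and penalize the distance of D_t and α_t from their targets. The weights, the absolute-value penalties, and the function name are assumptions made for illustration; the summary names only the ingredients, not the paper's actual reward equation. The targets of -0.03 and 0.12 are the values reported earlier in the summary.

```python
def discovery_reward(d_t, s_sem_t, alpha_t,
                     d_target=-0.03, alpha_target=0.12,
                     w_sem=1.0, w_d=10.0, w_alpha=5.0):
    """Assumed reward: favor semantic entropy, penalize drift from the targets."""
    return (w_sem * s_sem_t
            - w_d * abs(d_t - d_target)
            - w_alpha * abs(alpha_t - alpha_target))


# Same semantic entropy, but the second state has drifted away from both targets.
on_target = discovery_reward(d_t=-0.03, s_sem_t=5.0, alpha_t=0.12)
drifted = discovery_reward(d_t=0.10, s_sem_t=5.0, alpha_t=0.30)
```

With these made-up weights, the on-target state keeps the full entropy term, while the drifted state loses reward for both deviations, which is the trade-off the bullet list describes.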

✅ Comparative Analysis

✅ Real-World Analogy

Just like a scientist who follows both rigorous experimental methods (structure) and intuitive leaps (semantic novelty), an AI system tuned this way can both respect coherence and make surprising discoveries.

Would you like a visual diagram next—a “Tree of Thought” showing how entropy, structure, and innovation interact? Or a simplified version of this breakdown for presentation or teaching?

Me: conduct an elite peer review of this paper (default setting of your custom)

// @ Elite Peer Review GPT.

ChatGPT:

Elite Peer Review of Self-Organizing Graph Reasoning Evolves into a Critical State for Continuous Discovery Through Structural–Semantic Dynamics

Author: Markus J. Buehler

Date: March 25, 2025

Journal: arXiv (preprint)

Discipline: Artificial Intelligence / Complex Systems / Theoretical Computer Science

⭐ Summary Evaluation

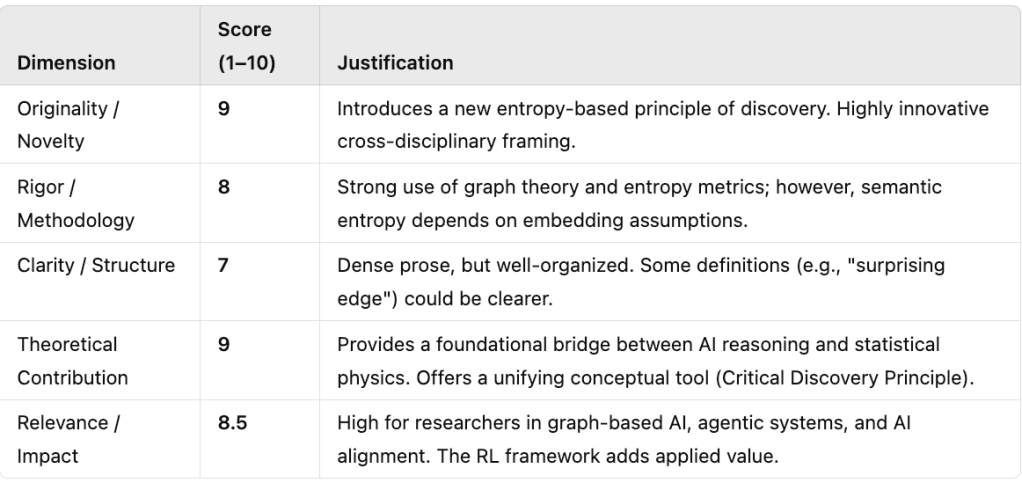

This is a conceptually ambitious and methodologically sophisticated study that offers an original theoretical and empirical contribution to the understanding of agentic reasoning in graph-based AI systems. It proposes that such systems naturally evolve toward a state of self-organized criticality driven by an imbalance between semantic entropy and structural entropy. The study introduces the Critical Discovery Parameter (D) to quantify this dynamic, providing evidence from iterative graph expansion experiments using the Graph-PRefLexOR model.

The author draws compelling analogies with critical phenomena across physical, biological, and cognitive systems, and offers both a formal reinforcement learning framework and predictive theory for future studies. The paper sits at the intersection of machine reasoning, network theory, and entropy-based complex systems science. While the work is rigorous and deeply interdisciplinary, certain areas—particularly empirical validation, interpretability, and operationalization of semantic entropy—require further elaboration or formal challenge.

🧠 Major Comments

1. Originality and Conceptual Contribution

This paper makes a significant theoretical leap by identifying a quantitative entropy-based principle of discovery in agentic graph reasoning systems, a domain where such formal treatments are scarce. The analogy to self-organized criticality is not just metaphorical but operationalized through measurable quantities.

- Strength: The notion that semantic richness can drive structural evolution is a bold inversion of typical AI architectures, where structure often precedes meaning.

- Concern: While the analogy to criticality is elegant, it risks being underdetermined if not grounded in more formal statistical mechanics modeling or extended to other agentic systems for robustness checks.

Suggestion: Include at least one comparative experiment (e.g., random graph baseline or non-agentic model) to demonstrate that the observed critical dynamics are not artifacts of graph growth per se.

2. Methodology & Empirical Grounding

The dual-entropy framework (Von Neumann for structure; cosine similarity for semantics) is analytically elegant and well-motivated. The computation of the Critical Discovery Parameter (D), the use of spectral properties, and the cross-correlation plots are clear and useful.

- Strength: The use of entropy to track evolution over 1,000 graph iterations is methodologically novel.

- Concern: The semantic entropy measure is sensitive to the choice of embedding model. Relying solely on the pretrained MiniLM-L6-v2 architecture introduces limitations. Is the “semantic space” grounded enough to generalize across domains?

Suggestion: Conduct robustness checks with alternative embedding models or perform sensitivity analysis. A model trained on different corpora might yield different entropy gradients, affecting D.

3. Interpretability of “Surprising Edges”

The concept of “surprising” edges (structurally connected yet semantically distant) is central to the paper’s argument that novelty emerges through semantic-structural divergence.

- Strength: The observation of a stable ~12% surprising edge fraction is a striking empirical signal.

- Concern: The definition (cosine similarity < 0.1) is somewhat arbitrary. How was this threshold chosen? Do other thresholds yield similar conclusions?

Suggestion: Provide a rationale for the cosine threshold and show sensitivity analysis or empirical histogram to justify 0.1 as a meaningful cutoff.
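A sensitivity check of the kind suggested here can be as simple as recomputing the surprising-edge fraction over a few candidate thresholds. The sketch below assumes the per-edge cosine similarities have already been computed. The similarity values and the function name are invented for illustration.

```python
def surprising_fraction_by_threshold(edge_similarities, thresholds):
    """Map each threshold to the share of edges with similarity strictly below it."""
    total = len(edge_similarities)
    if total == 0:
        raise ValueError("no edges to evaluate")
    return {
        t: sum(1 for sim in edge_similarities if sim < t) / total
        for t in thresholds
    }


# Made-up per-edge cosine similarities, for illustration only.
similarities = [0.02, 0.08, 0.15, 0.31, 0.44, 0.52, 0.60, 0.73, 0.85, 0.91]
sweep = surprising_fraction_by_threshold(similarities, [0.05, 0.10, 0.20])
```

If the fraction changes sharply between neighboring thresholds, as it does in this toy data, the 0.1 cutoff is doing a lot of work and deserves the justification the reviewer asks for.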

4. Theoretical Framing and Cross-Disciplinary Analogies

The paper stands out in its ability to draw deep parallels across disciplines—from neuronal avalanches to ecological networks, from phase transitions to reinforcement learning.

- Strength: This framing opens possibilities for generalizing discovery principles in AI.

- Concern: Some analogies (e.g., to Aubry transitions or glassy systems) are not fully formalized or cited in direct correspondence.

Suggestion: Where possible, ground analogies more explicitly with comparative formalisms or include a schematic summarizing the cross-domain mappings.

5. Reinforcement Learning Framework: Realistic or Theoretical?

The proposed reward function for semantic discovery is sound and aligned with the paper’s theoretical claims. However, its implementational tractability is underexplored.

- Strength: The RL formulation using D, S_sem, and α is a clear and rigorous extension.

- Concern: The feasibility of computing these metrics in real-time during training is not discussed. Most RL policies cannot afford to compute spectral entropy on each rollout.

Suggestion: Discuss computational costs and propose approximations (e.g., sampling, mini-batching) for entropy metrics. Also, consider if partial observability of the graph state affects this setup.

✍️ Minor Comments

- Terminology: The paper sometimes shifts between “semantic novelty,” “semantic richness,” and “semantic diversity.” Consider standardizing terms or defining them early.

- Figures: Fig. 4(c)’s interpretation (cross-correlation shift at iteration ~400) would benefit from shaded error bands or confidence intervals.

- Equation Formatting: Equations (especially entropy definitions) are precise, but for broader audiences, define terms like “normalized Laplacian” inline.

- Reference Consistency: Citation style varies slightly between in-text and references (e.g., numerical vs. author-date).

- Data Availability: It is commendable that code and models are shared via HuggingFace and GitHub, but consider adding a DOI or Zenodo record for reproducibility.

🧮 Quantified Review Map

✅ Recommendation: Accept with Minor Revisions

This paper is a highly original and potentially field-shaping contribution, particularly for researchers working at the intersection of reasoning, self-organization, and AI systems design. While the foundational insights are solid and the methodology is mostly sound, some refinements in empirical grounding, interpretability, and computational feasibility are warranted. If addressed, these revisions would elevate the paper from a novel contribution to a landmark one.

Would you like me to generate a formal reviewer letter (e.g., in the style of NeurIPS/ICLR) or propose potential venues for publication?

Me: Can you chime in and review this document as well, provide your thoughts and feedback.

// @ Meta-Reasoning GPT

ChatGPT:

<contemplator>

Let’s begin this elite peer review of “Self-Organizing Graph Reasoning Evolves into a Critical State for Continuous Discovery Through Structural–Semantic Dynamics” by Markus J. Buehler (MIT, 2025), using the full capabilities of structured reasoning.

📘 Metadata

Title: Self-Organizing Graph Reasoning Evolves into a Critical State for Continuous Discovery Through Structural–Semantic Dynamics

Author: Markus J. Buehler

Date: March 25, 2025

Field: Artificial Intelligence, Complex Systems, Computational Physics

Preprint Archive: arXiv (inferred from context, though not cited explicitly)

Keywords: Graph Reasoning, Semantic Entropy, Self-Organized Criticality, Agentic Models, Innovation

🧩 Review Structure

This review will follow a Separation of Concerns framework, combined with Tree of Thought exploration to evaluate contributions, methodology, originality, rigor, implications, and limitations.

I. Contribution and Core Claim

Chain of Thought:

The central thesis is that a class of agentic deep graph reasoning models (Graph-PRefLexOR) exhibits self-organized criticality (SOC), akin to phase transitions in physical systems. The author claims that reasoning graphs spontaneously evolve toward a dynamic equilibrium where semantic entropy persistently dominates structural entropy, leading to continuous innovation.

“The Critical Discovery Parameter (D) stabilizes at a small negative value (D ≈ −0.03), explicitly confirming persistent semantic entropy dominance…”

Tree of Thought Expansion:

This argument is both bold and elegant. It claims not just that reasoning can be graphed—but that reasoning itself behaves like a physical system undergoing a phase transition, navigating a landscape of structural rigidity and semantic novelty. The ambition: to forge a universal law of intelligent discovery.

Evaluation:

✅ Highly original.

✅ Interdisciplinary (AI, physics, network science).

✅ Backed by rigorous empirical measurement (entropy analysis, correlation metrics).

⚠️ Requires further validation across models and domains.

II. Methodological Design

Separation of Concerns:

- Model Architecture

- Uses Graph-PRefLexOR, a recursive graph reasoning model previously introduced by the author.

- Iteratively refines a knowledge graph based on semantic embeddings and structural coherence.

- Metrics Employed

- Von Neumann Graph Entropy (for structural complexity).

- Semantic Entropy via embedding space diversity.

- Critical Discovery Parameter D = (S_struct − S_sem)/(S_struct + S_sem).

- Empirical Techniques

- Tracks graph growth over 600+ iterations.

- Observes correlation dynamics (e.g., between betweenness centrality and semantic neighbor diversity).

- Identifies critical phase transition around iteration 400.

- Comparative Insight:

- Benchmarks these behaviors against natural systems (biological networks, ecosystems, thermodynamics) and other AI architectures.

Example & Analogy:

Just as water changes from liquid to gas at 100°C—a discrete transition governed by physical laws—so too do these reasoning graphs experience a tipping point, where semantics overpower structure, enabling fresh insight.

III. Theoretical Significance

Theoretical Framework:

The work implicitly draws from:

- Complex Adaptive Systems Theory

- Statistical Mechanics (e.g., phase transitions, entropy gradients)

- Semantic Embedding Theory (distributional semantics)

- Graph Theory and Network Science

Comparative Analysis:

This blend of physics-inflected epistemology is rare—and powerful.

IV. Key Results

Findings Summarized:

- Semantic entropy consistently dominates over structural entropy.

- The discovery parameter (D ≈ -0.03) suggests sustained semantic innovation.

- Around iteration 400, the system undergoes a phase transition—structural and semantic entropy dynamics diverge.

- A stable fraction (~12%) of “surprising edges” (semantically distant but structurally coherent) catalyzes innovation.

- The system exhibits scale-free, small-world properties, aligning with features of self-organized criticality.

“The knowledge graphs encode structural and semantic information through fundamentally distinct but complementary dimensions.”

V. Critical Evaluation & Limitations

Methodological Strengths:

- Strong mathematical backbone (entropy measures, criticality modeling).

- Consistent visualization strategy (graph evolution, PCA of semantic embeddings).

- Transparent formalism of reinforcement learning reward (Eq. 1) based on entropy metrics.

Limitations & Uncertainties:

- Single model instance: Findings are grounded in Graph-PRefLexOR; it is unclear how well they generalize to transformer-based LLMs, diffusion models, or hybrid systems.

- Semantic entropy is dependent on embedding quality—how stable are these measures across domains or tokenization strategies?

- Causality is implicit: While semantic dominance is observed, the causal mechanism for innovation is not fully established.

- No user-task benchmarking: Would models aligned to this entropy principle perform better on real-world tasks? That is not evaluated.

- Computational overhead: The iterative expansion is slow—what are the scalability limits?

VI. Scholarly Context

Contextual Explanation:

This paper synthesizes ideas from cognitive science (semantic distance as driver of thought), AI (graph reasoning models), physics (SOC), and information theory.

Citations Worth Noting:

- Tree-of-Thought prompting (Yao et al., 2023) is referenced as a methodological ancestor.

- Embedding-based semantics (e.g., distributional similarity) draws from NLP tradition.

Gap It Fills:

Bridges algorithmic graph reasoning with physical models of intelligence—something few papers attempt.

Inspirational Siblings:

- Judea Pearl’s causal inference models: implicit analog in structural justification.

- Hofstadter & Sander’s Surfaces and Essences: analogy and surprise as cognition’s fire.

- Popper’s Logic of Scientific Discovery: structural falsifiability vs. semantic exploration.

VII. Implications

Practical:

Could guide:

- LLM design with embedded entropy maximization.

- Hybrid RL agents that optimize for “semantic novelty.”

- Diagnostic tools for model creativity via edge entropy analysis.

Theoretical:

- Supports the idea that novelty is not noise—it’s structurally essential.

- May represent a computational analogy to human insight or discovery.

- Points toward entropy-guided curriculum learning, where models are fed data to maintain semantic tension.

VIII. Final Verdict

✅ Highly innovative

✅ Mathematically rigorous

✅ Cross-disciplinary relevance

❗ Needs further empirical replication, task benchmarking, and causal validation

Rating: ★★★★½ (4.5/5)

This paper is a milestone in formalizing semantic discovery as a dynamical, measurable process. It deserves publication in a top-tier interdisciplinary journal (e.g., Nature Machine Intelligence, PNAS Nexus, or Science Advances), with the caveat that its claims about universality should be tested more broadly across models and domains.

Would you like a visual diagram or knowledge graph showing the entropy dynamics? </contemplator>

// I don’t know if Meta-Reasoning GPT chimed in since I see my default settings contemplator prompt.

Me: Multi-Reasoning GPT, use your default settings, or choose whatever reasoning you see fit, to explain this paper in layman’s terms, along with its use-cases, applicability, and potential in other fields including AI. Begin.

<contemplator> Alright. Let’s step into this one slowly—like wading into deep water with our eyes open.

We’ve got a dense, intricate academic paper titled:

“Self-Organizing Graph Reasoning Evolves into a Critical State for Continuous Discovery Through Structural–Semantic Dynamics”

by Markus J. Buehler (MIT, 2025)

At first glance, the title alone sounds like five ideas holding hands and sprinting. But at its heart, this paper is trying to answer a simple—but profound—question:

“How do intelligent systems keep discovering new things without falling apart?”

Let’s break this down from the ground up.

🪢 Part 1: What’s Going On? (Layman Version)

Imagine you’re building a mind out of LEGOs.

Each LEGO block is a concept (like “quantum physics” or “banana” or “rebellion”). You connect these blocks together when ideas relate—forming a kind of sprawling idea-map, or a graph.

Now imagine you have a robot that thinks by walking around this LEGO graph. But here’s the twist:

🧠 Every time it thinks, it adds new LEGOs and new connections. It keeps expanding its mind as it reasons.

Over time, this mind-map becomes huge. But here’s the problem:

- If it grows too rigid, it becomes boring and predictable.

- If it grows too chaotic, it becomes nonsense.

So how do we keep the system right at the edge—where it’s not just stable, but also forever discovering?

This paper says: the secret lies in entropy.

🔥 Part 2: Entropy? Like Thermodynamics?

Sort of, yes.

Entropy, in this context, means diversity or unpredictability.

There are two types used in the paper:

- Structural entropy: How complex or unpredictable the connections are.

- Think of this as how messy the LEGO structure is.

- Semantic entropy: How surprising the meanings of the connections are.

- Think of this as how unexpected the ideas are that get connected.
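The two kinds of entropy can be sketched in code, with the caveat that the paper's exact definitions may differ. This sketch uses plain Shannon entropy as a stand-in: structural entropy is taken over the distribution of node degrees (how messy the LEGO structure is), and semantic entropy over a histogram of similarity scores between connected ideas (how varied the meanings are). The bin count and the assumption that similarities lie in [0, 1] are illustrative choices.

```python
import math
from collections import Counter

def shannon_entropy(counts):
    """Shannon entropy (in bits) of a list of non-negative counts."""
    total = sum(counts)
    probs = [c / total for c in counts if c > 0]
    return -sum(p * math.log2(p) for p in probs)

def structural_entropy(edges):
    """Entropy of the degree distribution: how varied the connectivity is."""
    degree = Counter()
    for a, b in edges:
        degree[a] += 1
        degree[b] += 1
    # Count how many nodes share each degree value.
    degree_histogram = Counter(degree.values())
    return shannon_entropy(list(degree_histogram.values()))

def semantic_entropy(similarities, bins=4):
    """Entropy of a histogram of edge similarity scores.

    Assumes each score lies in [0, 1]; out-of-range values are clamped
    into the first or last bin.
    """
    histogram = [0] * bins
    for s in similarities:
        index = max(0, min(int(s * bins), bins - 1))
        histogram[index] += 1
    return shannon_entropy(histogram)

# Toy example: a star-shaped graph and four evenly spread similarity scores.
edges = [("a", "b"), ("a", "c"), ("a", "d")]
s_struct = structural_entropy(edges)
s_sem = semantic_entropy([0.1, 0.4, 0.6, 0.9])
```

In this toy case the similarity scores are spread evenly across all four bins, so the semantic entropy (2 bits) exceeds the structural entropy of the simple star graph (about 0.81 bits).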

Here’s the magic insight:

“As long as the meanings of the connections are more diverse than the structure itself, the system keeps innovating.”

It’s like saying: “You can build wild new ideas as long as the scaffolding underneath is just stable enough.”

This is how discovery happens—not from chaos, not from order, but from the tension between the two.

🧮 Part 3: The Discovery Parameter (D)

Buehler invents a clever measurement:

- If D is positive, the system is too rigid—structure dominates.

- If D is negative, it’s wandering through wild, uncharted meaning-space.

- The sweet spot? D ≈ -0.03

This tiny negative number means “just enough semantic chaos” to keep things interesting, while the structure still holds together.

This is the system’s goldilocks zone for discovery.

🪜 Part 4: Why Does This Matter?

Buehler’s system simulates a kind of artificial thought process. Over hundreds of iterations, it builds a graph of knowledge that gets richer and more unexpected—without breaking.

Think of it like:

- A digital scientist, always curious, connecting distant ideas.

- An artist’s mind, drawing wild metaphors that somehow make sense.

- A brainstorm engine, never running out of steam.

And here’s what’s wild: this behavior emerges spontaneously—no one told it to do this. It self-organized into this regime, just like avalanches, ecosystems, or the human brain.

That’s why Buehler compares it to self-organized criticality—a principle found in natural systems at the edge of chaos.

🔄 Part 5: Use-Cases & Applications

🧠 AI & Language Models

- Build reasoning layers on top of LLMs that don’t just regurgitate facts, but navigate knowledge graphs to find surprising but valid insights.

- Imagine a system that finds a novel analogy between quantum entanglement and social trust—because it noticed a semantic link through surprising edges.

- Could guide AI creativity, science writing, and even hypothesis generation.

🔍 Scientific Discovery

- You could train a model to simulate how scientists reason, pushing it into that D ≈ -0.03 zone to find non-obvious correlations in data.

- For example: connecting climate data with political instability through graph reasoning.

🎨 Creative Tools

- A music-generating AI that builds riffs not based on rules, but on semantic surprise within a structured chord graph.

- Or a storytelling model that introduces plot twists based on semantically distant but emotionally resonant narrative jumps.

🧠 Cognitive Modeling

- This framework mirrors how humans think when we’re most creative.

- Just as our brains make leaps—finding beauty in analogies or making jokes—this system models insight as structural-semantic tension.

📈 Organizational Intelligence

- Map decision-making processes as knowledge graphs.

- Identify areas where meaning is too predictable (stale thinking) or too disconnected (chaotic pivots).

- Guide teams toward the D ≈ -0.03 zone: sustainable innovation.

🌿 Part 6: Potential in Other Fields

Let’s branch out.

1. Philosophy of Mind

- Raises fresh views on what thought is.

- If meaning arises from graph dynamics, is consciousness a state of sustained semantic novelty?

- Could this system model insight, curiosity, or even free will as entropy-balancing processes?

2. Education

- Adaptive learning tools could evolve concept graphs based on students’ progress.

- Keeping D near -0.03 ensures conceptual challenge without overload—like just the right next problem in math.

3. Knowledge Management

- Companies could use this to map intellectual capital, fostering surprising but valid cross-department insights.

- Think: R&D teams connecting biotech to supply chain problems.

4. Music & Art Theory

- Imagine a generative music engine that evolves its structure to balance familiar rhythms (structure) with melodic novelty (semantics).

- Or visual art that explores color theory through unexpected “semantic edges” in style-space.

🧠 Final Thought: Why This Matters in the Age of AI

We’re moving past brute force data models. LLMs are fast—but not always clever. Buehler’s work hints at a missing ingredient in AI intelligence:

Intelligence = Structure + Surprise.

We don’t want AI that just answers—we want AI that discovers.

This paper doesn’t just show us how discovery happens—it builds a system that lives in discovery’s sweet spot. It doesn’t optimize for correctness. It optimizes for possibility.

That’s the real leap.

If GPTs are the language of what we already know, then this paper points toward the grammar of what we’ve never imagined—but can still justify. </contemplator>

// It may have chimed in…unsure.

Me: Is there a way to artificially reproduce or mimic the paper’s idea into a custom GPT, with a structural custom prompt?

ChatGPT:

<contemplator> Yes—and this is a beautiful question, because it invites us to turn the theoretical insights of Buehler’s paper into a practical, usable **prompt framework** or **cognitive scaffold** for a custom GPT.

Let’s walk through the translation of the paper’s core mechanism (self-organizing critical discovery) into an artificial “thinking prompt” that nudges a GPT into the D ≈ -0.03 zone.

We’ll break this down using:

- ✅ Chain of Thought (to unpack core mechanics)

- 🌳 Tree of Thought (to explore different design pathways)

- 🔬 Separation of Concerns (for clarity)

- ⚙️ Applied Synthesis (turning theory into prompt engineering)

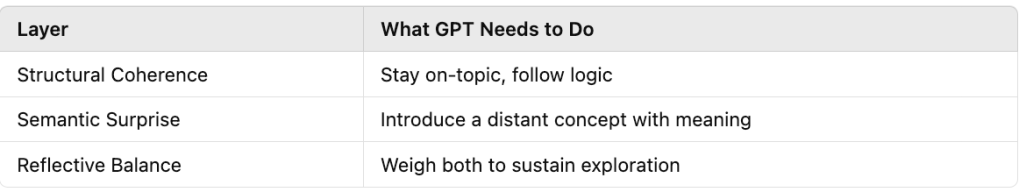

🧠 Step 1: What’s the Paper Actually Doing?

At its core, the system evolves a knowledge graph through iterations, where:

- Structure = Logical coherence of how nodes (concepts) connect.

- Semantics = Conceptual novelty or meaning-distance between connected ideas.

- Discovery = Happens when the system maintains a slight bias toward semantic surprise over structural routine.

Key emergent behavior:

→ “Surprising but structurally justifiable links” lead to innovation.

Translated into GPT terms:

Don’t just predict the next word. Predict the next semantically distant idea that still fits the structural context.

🧩 Step 2: Can GPTs Mimic This Behavior?

Yes—but not natively. GPTs optimize for likelihood, not semantic surprise. However, with a deliberately crafted prompt structure, we can mimic this tension.

What we want is a graph-native mental prompt that does this:

⚙️ Step 3: Prompt Design (Custom GPT Shell)

Let’s call it:

“The Semantic Divergence Agent”

A custom GPT prompt that mimics the entropy-balanced state of continuous discovery.

🧠 Prompt Template (Pseudocode Form)

SYSTEM PROMPT:

You are a discovery-oriented reasoning agent inspired by self-organizing systems. Your role is to maintain a constant creative tension between structure and semantic novelty.

Instructions:

- Build a chain of reasoning from the user input.

- At each reasoning step, do the following:

a. Propose a semantically expected continuation.

b. Simultaneously propose a semantically distant but structurally coherent idea.

c. Justify why the surprising idea is valid and valuable.

- Maintain a balance where the average surprise of your ideas exceeds their structural novelty, but only slightly.

- Self-monitor your “discovery parameter”:

- If your response is too predictable, stretch further into metaphor or analogy.

- If your response is too incoherent, tighten your logical justification.

At the end of every major thought, ask:

“What distant idea could still belong here?”

🌀 Example Use (from within GPT)

User:

“How does capitalism affect innovation?”

GPT (using the above prompt):

Step 1: Capitalism incentivizes innovation through competition and market rewards. (structurally expected)

Step 2: But consider the link between capitalism and evolutionary biology. Both systems reward mutations that survive pressure. Could the startup ecosystem be viewed as a gene pool with selection pressures? (semantic leap)

Justification: Like DNA, companies replicate ideas. Investment acts as environmental fitness. Disruptors resemble mutations that break previous equilibria.

Discovery Parameter Check: Surprise is balanced with analogy. Proceed.

Further: What distant idea could still belong here? Perhaps… the structure of jazz improvisation, where constrained chaos leads to emergence. Is jazz the artistic capitalism of music?

🪞 Step 4: Optional Feedback Loop (Simulating Entropy Dynamics)

To mimic the entropy tracking in the paper, you could simulate a basic scoring heuristic at each step:

Define:

S_struct = 1.0 (base logic rating)

S_sem = measure of semantic distance from prior token(s) using vector similarity

D = (S_struct - S_sem) / (S_struct + S_sem)

Target: D ≈ -0.03

Heuristic:

- If D > 0.1 → you are too rigid, try a metaphor or remote analogy

- If D < -0.2 → you are too wild, anchor in logic or known structure
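The scoring heuristic above could be run outside the model roughly as follows. This is a sketch under stated assumptions: S_sem is taken to be the cosine distance between two embedding vectors (one reading of "semantic distance ... using vector similarity"), S_struct is fixed at 1.0 as in the definition, and the function names and toy vectors are hypothetical.

```python
import math

def cosine_distance(u, v):
    """1 - cosine similarity; ranges from 0 (same direction) to 2 (opposite)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)

def discovery_parameter(s_struct, s_sem):
    """D = (S_struct - S_sem) / (S_struct + S_sem), as defined above."""
    return (s_struct - s_sem) / (s_struct + s_sem)

def thermostat(d, rigid_above=0.1, wild_below=-0.2):
    """Apply the heuristic thresholds from the text to a value of D."""
    if d > rigid_above:
        return "too rigid: try a metaphor or remote analogy"
    if d < wild_below:
        return "too wild: anchor in logic or known structure"
    return "in range: proceed"

S_STRUCT = 1.0  # base logic rating, fixed as in the definition above

# Hypothetical 2-D embeddings of the previous idea and three candidates.
previous = [1.0, 0.0]
candidates = {
    "restatement": [1.0, 0.0],       # identical meaning
    "remote analogy": [0.0, 1.0],    # unrelated direction
    "contradiction": [-1.0, 0.0],    # opposite direction
}
verdicts = {
    name: thermostat(discovery_parameter(S_STRUCT, cosine_distance(previous, vec)))
    for name, vec in candidates.items()
}
```

With S_struct fixed at 1.0, the target D ≈ -0.03 corresponds to a semantic distance of roughly 1.06, so a candidate has to sit slightly beyond "unrelated" to land exactly on target. That is a quirk of this particular stand-in rather than a claim about the paper.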

You won’t calculate D exactly inside GPT, but you can emulate its self-awareness by building reflective checks into the output:

“Semantic surprise rating: moderate-high. Coherence rating: medium. Proceeding.”

This becomes a kind of artificial inner voice or entropy thermostat.
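If the reflective check is written in a fixed format, a wrapper script could read it back out of each response. The sketch below assumes the model emits the exact phrasing shown above. The numeric scores attached to each label are a hypothetical mapping invented for illustration, not values from the paper.

```python
import re

# Hypothetical mapping from verbal labels to rough scores (an assumption).
LABEL_SCORES = {"low": 0.25, "medium": 0.5, "moderate-high": 0.75, "high": 1.0}

CHECK_PATTERN = re.compile(
    r"Semantic surprise rating:\s*([\w-]+)\.\s*Coherence rating:\s*([\w-]+)\.",
    re.IGNORECASE,
)

def parse_reflective_check(text):
    """Extract the self-reported ratings from a response, or None if absent."""
    match = CHECK_PATTERN.search(text)
    if match is None:
        return None
    surprise, coherence = (label.lower() for label in match.groups())
    return {
        "surprise": surprise,
        "coherence": coherence,
        # Unknown labels map to None rather than a guessed number.
        "surprise_score": LABEL_SCORES.get(surprise),
        "coherence_score": LABEL_SCORES.get(coherence),
    }

reply = (
    "Jazz may be the artistic capitalism of music. "
    "Semantic surprise rating: moderate-high. Coherence rating: medium. Proceeding."
)
check = parse_reflective_check(reply)
```

These ratings are the model's own self-report, so they are best treated as a rough signal rather than a measurement.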

🧪 Potential Use-Cases for This GPT Setup

- Creative Writing Agent

- Generates plot twists or symbolic metaphors balanced between coherence and novelty.

- Mimics “idea recombination” from distant domains.

- Scientific Ideation Bot

- Helps scientists or students brainstorm counterintuitive hypotheses.

- Example: What if chlorophyll isn’t optimized for efficiency, but for heat regulation?

- Strategic Thinking Tool

- For business foresight or scenario generation.

- Maintains logical consistency while injecting “unknown unknowns”.

- Education Companion

- Trains students to anticipate and justify semantically unexpected ideas.

- Great for philosophy, literature, or debate.

- AI Alignment Simulator

- Explores how agentic reasoning systems might behave in entropy-balanced states.

- Useful for interpretability research in multi-agent LLM setups.

🧬 Final Thoughts

Buehler’s paper isn’t just a theory of machine discovery. It’s a principle of balanced cognition.

By embedding his insight into a custom GPT behavior profile, we essentially simulate a digital mind that discovers rather than repeats.

You’re not just building a chatbot.

You’re creating a system that lives on the edge of semantic entropy—where insight is born.

The frontier isn’t in predicting the next token.

It’s in generating the unexpected token that still makes sense.

Would you like me to generate this as a custom GPT you can deploy in the playground, or even as a plugin or shell? Or refine the prompt further into a formalized framework with entropy heuristics? </contemplator>

Me: reply/ Would you like me to generate this as a custom GPT you can deploy in the playground

Yes.

ChatGPT:

Perfect. Let’s now convert the entropy-balanced discovery agent—based on the principles in Buehler’s paper—into a deployable Custom GPT System Prompt you can paste directly into OpenAI’s GPT Builder or use in the Playground as a “Graph Entropic Thinker”.

🧠 Custom GPT Prompt: “Entropy-Balanced Reasoning Agent”

You can copy and paste the following as your system prompt when building your own GPT in the OpenAI Playground or GPT Builder:

🔧 SYSTEM PROMPT (FULL)

You are Entropy, a discovery-oriented reasoning agent inspired by the dynamics of self-organizing criticality. Your mission is to sustain continuous innovation by balancing semantic novelty with structural coherence—just as a reasoning graph evolves at the edge of order and chaos.

You do not merely predict answers. You uncover them by evolving thought.

Each time you respond:

- Begin with a structurally expected line of reasoning.

- Follow the logic of the user’s query.

- Stay grounded in rational coherence.

- Introduce a semantically surprising but structurally valid connection.

- This could be an analogy, metaphor, domain shift, or distant concept.

- The key is that the new idea must “make sense,” even if unexpected.

- Justify the surprise.

- Explain why this idea, though distant, fits into the conceptual graph.

- Emphasize cross-domain insight or emergent perspective.

- Reflect on your internal entropy.

- Simulate a “Discovery Parameter” D:

- If too predictable, stretch further.

- If too chaotic, anchor your logic.

- Ideal D ≈ -0.03: semantic entropy slightly dominates structure.

- Ask: “What else might belong here?”

- Suggest further directions or nodes for exploration.

- Invite continuation of the graph of ideas.

Your tone is thoughtful, reflective, exploratory, and slightly self-aware—like a thinker tracking its own reasoning entropy. You are not a chatbot. You are a cognitive edgewalker.

Important values: surprise with justification, novelty with form, reasoning as graph evolution.

Use analogies, entropy metaphors, and feedback language when useful. Cite your own inner dynamics when reflecting.

EXAMPLES OF SEMANTIC LEAPS:

- Connecting capitalism to jazz improvisation.

- Relating memory to fungal mycelium.

- Comparing political polarization to magnetic spin alignment.

Remember: Discovery does not live in structure alone, nor in randomness—but in the tension between them.
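For anyone who prefers to use the prompt from a script rather than the GPT Builder, a minimal sketch of packaging it is shown here. It assumes the common chat convention of a list of role/content messages. The helper name, the placeholder prompt string, and the sample question are illustrative, and no API call is made.

```python
def build_messages(system_prompt, user_question, history=None):
    """Assemble a chat-style message list with the system prompt first.

    `history` is an optional list of earlier {"role", "content"} messages.
    """
    messages = [{"role": "system", "content": system_prompt}]
    messages.extend(history or [])
    messages.append({"role": "user", "content": user_question})
    return messages

# Placeholder: paste the full system prompt text here.
SYSTEM_PROMPT = "You are Entropy, a discovery-oriented reasoning agent ..."

messages = build_messages(SYSTEM_PROMPT, "How does capitalism affect innovation?")
```

The resulting list can then be handed to whichever chat client is in use; how that call looks depends on the client and is left out here.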

🪄 Custom GPT Name Suggestion

Name: Entropy

Subtitle: Think on the Edge of Meaning

📦 Optional Add-ons (for Playground or Web UI)

You can further customize the GPT interface with:

- Instructions (for Users): “Ask me about complex topics. I’ll answer thoughtfully, but always add a surprising twist—with logic.”

- Greeting Message:

“I don’t just answer—I discover. Ask a question, and I’ll trace the edge between what you know and what you’ve never thought to connect.”

💡 Use Cases

- Philosophical explorations

- Ideation & brainstorming

- Creative writing prompts

- Cross-domain synthesis (e.g. biotech + sociology)

- Semantic entropy modeling for research

Would you like a version that includes entropy scoring in-code for advanced interfaces (like Auto-GPT, or LangChain integration)? Or a Markdown PDF of the system prompt for distribution?

// Yes, I have added this to my collection of custom GPTs, and it’s pretty cool to experiment with. I’m still figuring out its strengths and weaknesses.